Question 1

Below is the procedure for reducing the processing time of an FME workspace (.fmw) script. The point of this assignment was to gain an understanding of the different data structures used by GIS data formats.

Below are the steps for cleaning up the .saf file:

- Unzip the .saf file with 7-Zip or a similar tool. If you set up a default editor in 7-Zip, you can edit the necessary file inside the archive without extracting the files (Scott can illustrate).

- The zip file contains all the data layers as well as some config and metadata files.

- Edit the file called exports.dir to remove the layers you are not interested in decompressing for the FME translation.

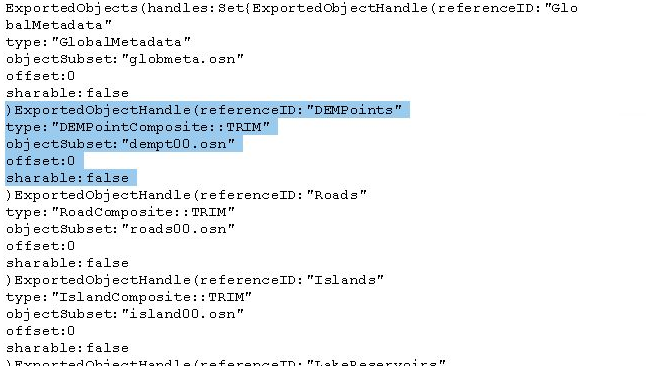

- To remove a layer, start at the closing parenthesis “)” at the beginning of the layer description and delete the lines up to and including “false”.

- Check out the image below, which illustrates the DEM points section to be removed:

- Once the section is removed, save the file and zip the files back up into a .saf file.

- Open the .fmw file in FME Workbench; in our case, saif2spatialite_geotiff_csv.fmw.

- Choose your altered .saf file as the input and run the Workbench script.
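The unzip and re-zip steps above can be scripted. Below is a minimal sketch, assuming the `unzip` and `zip` command-line tools are installed and that the original archive keeps its files at the top level. The helper names `unpack_saf` and `repack_saf` and the file names in the usage comments are hypothetical, and removing the layer entries from exports.dir is still done by hand in an editor.

```shell
#!/usr/bin/env bash
# Sketch: unpack a .saf archive for editing, then zip it back up.
# Assumes the unzip and zip tools are installed (hypothetical helper names).

# unpack_saf SAF_FILE DEST_DIR
# A .saf file is a zip archive, so unzip can extract it into DEST_DIR.
unpack_saf() {
    local saf_file=$1 dest_dir=$2
    mkdir -p "$dest_dir"
    unzip -q -o "$saf_file" -d "$dest_dir"
}

# repack_saf SRC_DIR OUT_FILE
# Zip the contents of SRC_DIR into OUT_FILE with the files at the top
# level of the archive (assumed to match the original layout).
repack_saf() {
    local src_dir=$1 out_file=$2
    # Turn a relative OUT_FILE into an absolute path before changing directory
    case $out_file in
        /*) ;;
        *) out_file="$PWD/$out_file" ;;
    esac
    # Remove any old copy so zip creates a fresh archive instead of updating one
    rm -f "$out_file"
    (cd "$src_dir" && zip -q -r "$out_file" .)
}

# Example usage (hypothetical file names):
#   unpack_saf trim_data.saf saf_work
#   "${EDITOR:-nano}" saf_work/exports.dir   # delete the unwanted layer entries
#   repack_saf saf_work trim_data_trimmed.saf
```

Re-zipping from inside the working folder (the `cd` in a subshell) avoids adding an extra folder level to the archive.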

NOTES:

The .osn files hold the data for each layer, and some layers are spread across several .osn files to keep each file below 2 MB in size (a limit of this older file type). Deleting those files on their own will break the script in Workbench. If you delete the files and also remove the layer's entry in the exports.dir file, it will work; the key is to edit exports.dir properly.

Here is the link to the tutorial where Scott describes the method of reducing the number of files; the .saf-specific part begins around the 50-minute mark:

Question 2

Below is a working Bash script Matt created to convert the images in one folder and write them to a second folder, so that all the output images share the same projection. Please read the comments to understand the logic of the script.

[30/10 15:53] Matt McLean

```shell
#!/usr/bin/env bash
# Convert all images in the originals folder to the Web Mercator projection

root_folder=~/data/assignment1

# Create the output folder if it does not exist yet
mkdir -p "$root_folder/mercator"

# Do an operation on every file in the originals folder
# (a glob is safer than parsing the output of ls)
for path in "$root_folder"/originals/*
do
    [ -f "$path" ] || continue
    file=$(basename "$path")

    # Use gdalinfo to extract the projection:
    # gdalinfo pipes to grep, which finds the line containing PROJCS,
    # and awk takes the value inside the first pair of quotes.
    # GDAL 3 and later print WKT2 (PROJCRS) by default; add
    # "-wkt_format WKT1" to gdalinfo if the grep finds nothing.
    projection=$(gdalinfo "$path" | grep PROJCS | awk '{split($0, a, "\""); print a[2]}')

    echo "$file: $projection"

    if [ "$projection" != "WGS_1984_Web_Mercator" ]
    then
        # If not already Web Mercator, reproject it with gdalwarp
        gdalwarp -t_srs EPSG:3857 "$path" "$root_folder/mercator/$file"
    else
        # If already in the correct projection, just copy the file
        cp "$path" "$root_folder/mercator/$file"
    fi
    # fi is "if" backwards and tells the computer the if block ends here
done
# done closes the for loop
```
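To see what the `grep | awk` step in the script does, the sketch below runs the same pipeline on a few hypothetical lines of gdalinfo-style WKT instead of a real image, so it works without GDAL installed. The function name `extract_projection` is made up for illustration.

```shell
#!/usr/bin/env bash
# Sketch of the grep | awk step, run on sample text instead of real
# gdalinfo output (the sample lines are hypothetical).

extract_projection() {
    # grep keeps lines containing PROJCS; awk splits each one on double
    # quotes and prints the second piece, i.e. the text inside the first quotes
    grep PROJCS | awk '{split($0, a, "\""); print a[2]}'
}

sample='Coordinate System is:
PROJCS["WGS_1984_Web_Mercator",
    GEOGCS["GCS_WGS_1984",'

printf '%s\n' "$sample" | extract_projection
```

If there is no PROJCS line (for example an unprojected image, or GDAL 3's default WKT2 output), the pipeline prints nothing, so the script's `if` test treats the image as not yet in Web Mercator and warps it.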

Question 3

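Check out the video below, which shows how to change the default export format. In reality, the GeoPackage format that QGIS uses by default is not a SpatiaLite database, and the SQLite export option does not create a SpatiaLite database either.

SpatiaLite and GeoPackage are both based on SQLite, but GeoPackage is not based on SpatiaLite: it is a separate OGC standard with its own metadata tables and geometry encoding.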
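One way to tell these formats apart is to look for their characteristic metadata tables: the GeoPackage standard requires a `gpkg_contents` table, while SpatiaLite creates its own tables such as `geometry_columns_auth` (see Question 5). The sketch below assumes the `sqlite3` command-line shell is installed and a SpatiaLite 4.x style layout; the function names are hypothetical and the check is a heuristic, not a full validation of either format.

```shell
#!/usr/bin/env bash
# Sketch: guess whether an SQLite file is a GeoPackage, a SpatiaLite
# database, or neither, by looking for characteristic metadata tables.
# Assumes the sqlite3 shell is installed; the marker tables are a heuristic.

has_table() {
    # Prints 1 if a table or view named $2 exists in database $1
    sqlite3 -list "$1" "SELECT 1 FROM sqlite_master WHERE name='$2';"
}

sqlite_flavour() {
    local db=$1
    if [ "$(has_table "$db" gpkg_contents)" = "1" ]; then
        echo "geopackage"
    elif [ "$(has_table "$db" geometry_columns_auth)" = "1" ]; then
        echo "spatialite"
    else
        echo "plain sqlite"
    fi
}

# Example usage (hypothetical file name):
#   sqlite_flavour my_export.gpkg
```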

Question 4

Here are the steps Scott took to find the software and install it. You can also use Synaptic to install it by performing a similar search to find the installation package.

- Open a terminal window in your VM and run the following commands.

- Search for the package: `aptitude search sqlite | grep browser` (if aptitude is not installed, `apt search sqlite | grep browser` works the same way)

- I found a package called sqlitebrowser.

- Then install the package: `sudo apt install sqlitebrowser`

- Done.

NOTE:

As you will find the more you use Linux, the grep command is one of the most useful commands there is. It searches for text in the output of another command or within files. The “|” is called a pipe: it passes the output of the command on its left to the command on its right.
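A small sketch of the pipe and grep ideas above. It uses made-up sample text in place of real `aptitude search` output (the package descriptions are invented) and a throwaway temporary file, so it runs without installing anything.

```shell
#!/usr/bin/env bash
# Sketch of a pipe feeding text into grep. The lines below are made-up
# sample text imitating a package search result, not real aptitude output.

sample_search_output='p   libsqlite3-dev   - SQLite 3 development files
p   sqlite3          - command line interface for SQLite 3
p   sqlitebrowser    - GUI editor for SQLite databases'

# Left of the pipe: printf writes the text. Right of the pipe: grep keeps
# only the lines that contain "browser".
printf '%s\n' "$sample_search_output" | grep browser

# grep can also search within a file; -n prefixes each match with its line number
demo_file=$(mktemp)
printf 'first line\nsecond line mentions sqlitebrowser\n' > "$demo_file"
grep -n browser "$demo_file"
```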

Question 5

When you investigate a SpatiaLite database, you will find several places to look for the layers it contains.

As mentioned above, a SpatiaLite database is an SQLite database with additional tables that extend the SQLite format to store spatial entities (objects) and allow GIS software to understand them. This is analogous to PostgreSQL and PostGIS, as PostGIS is an extension for PostgreSQL.

There are several tables that all have information regarding the available layers:

- SpatialIndex: a table that lists the layers and the spatial index associated with each one in the database (Scott can explain)

- geom_cols_ref_sys: defines the projection of each layer

- geometry_columns: the most popular table used to find the available layers. It defines the type of geometry (e.g. point, line, polygon) and the dimension of the layer (2D vs 3D). It also holds projection information (just an EPSG value).

- geometry_columns_auth: the authorization (permission) settings of each layer, for instance whether the layer is read-only

- geometry_columns_statistics: statistics stored about each layer, such as its extent and number of features

- geometry_columns_time: records when each layer's data last changed, following the database operations we looked at in lab (insert, update or delete)
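As an example of using `geometry_columns` to find the available layers, the sketch below queries it with the `sqlite3` command-line shell. It assumes sqlite3 is installed and the SpatiaLite 4.x column names shown; the function name `list_layers` is hypothetical. In that layout, `geometry_type` is a numeric code (for example 1 for points, 2 for lines and 3 for polygons) rather than a word.

```shell
#!/usr/bin/env bash
# Sketch: list the layers recorded in a SpatiaLite database's
# geometry_columns table. Assumes the sqlite3 shell is installed and the
# SpatiaLite 4.x column names used below.

list_layers() {
    local db=$1
    # -list with a custom separator gives one readable row per layer;
    # -header prints the column names first
    sqlite3 -list -header -separator ' | ' "$db" \
        "SELECT f_table_name, f_geometry_column, geometry_type, coord_dimension, srid
         FROM geometry_columns
         ORDER BY f_table_name;"
}

# Example usage (hypothetical file name):
#   list_layers trim_data.sqlite
```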

NOTE:

There are also tables (strictly, views) that replicate the ones above, with the prefix vector_ (for example vector_layers). These contain the same information as the ones above.

Many of the tables mention an EPSG code and a geometry_column. We will discuss EPSG codes further in lecture, but they are basically an index of globally defined and registered projections.

The geometry_column is the name of the column in which the layer holds its geometries (the feature coordinates). The geometries are usually stored in a binary format based on Well-Known Binary (WKB) and can be displayed as Well-Known Text (WKT). Each piece of software that writes to spatially enabled databases has a default name for this column, but the user can usually rename it. Back when Scott started with PostGIS, the standard was “the_geom”.
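To turn the srid stored for each layer into a recognizable EPSG code and projection name, `geometry_columns` can be joined to the `spatial_ref_sys` table, similar in spirit to what the `geom_cols_ref_sys` view provides. This is a sketch assuming the sqlite3 shell and the SpatiaLite 4.x columns `srid`, `auth_name`, `auth_srid` and `ref_sys_name`; the function name `layer_projections` is hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: show the registered projection behind each layer's srid by
# joining geometry_columns to spatial_ref_sys (SpatiaLite 4.x column names assumed).

layer_projections() {
    local db=$1
    # auth_name || ':' || auth_srid builds a code such as "epsg:3005"
    sqlite3 -list -separator ' | ' "$db" \
        "SELECT g.f_table_name, s.auth_name || ':' || s.auth_srid, s.ref_sys_name
         FROM geometry_columns AS g
         JOIN spatial_ref_sys AS s ON g.srid = s.srid
         ORDER BY g.f_table_name;"
}

# Example usage (hypothetical file name):
#   layer_projections trim_data.sqlite
```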